Heuristics have a reputation for being a somewhat cheap replacement for true knowledge. However, they not only form an integral part of human decision making, but can also be shown to outperform complex probabilistic decision tools under certain circumstances. This article tries to give a simple and intuitive introduction to the fascinating world of heuristic thinking, while proposing that humans often act rationally in the real world.

Today I want to start with a basic topic in decision making: the notion of heuristics. I guess most readers will have heard of heuristic thinking and have a certain conception of what heuristics are about. Most probably it will be something like:

Heuristics are fast and simple rules that allow us to make approximate inferences and decisions in cases where we lack necessary information or time. They are simplifications of more complex and accurate decision rules. Due to their intuitive nature, they often mislead us. This is why it is always preferable to use the correct decision rule based on all available data if possible.

While this definition certainly captures some of the properties of heuristics, it also contains several misconceptions. Let us have a look at the different points mentioned above.

Heuristics are simple rules that allow us to make approximations and decisions very fast, and they are usually simplifications that omit several aspects of the problem at hand. However, do they often mislead us? If you share the opinion that most people have about heuristics, your answer is probably yes, especially if you have read the popular book by Daniel Kahneman, “Thinking, Fast and Slow” (1) (which is a must-read if you are interested in decision making). It is an impressive collection of psychological experiments conducted over several decades within the last century. Kahneman and his co-worker Amos Tversky were interested in the biases people exhibit when they have to make decisions (Kahneman received the Sveriges Riksbank Prize in Economic Sciences in Memory of Alfred Nobel in 2002 for this work; Tversky had died in 1996 and could not share it). Most problems involved a certain amount of probabilistic thinking, and the correct solution was usually logically well defined. An example of the type of (easy) questions they asked is the following (freely reinterpreted for this article):

Linda is thirty-one years old, single, outspoken, and very bright. She majored in philosophy. As a student, she was deeply concerned with issues of discrimination and social injustice, and also participated in antinuclear demonstrations.

Which alternative is more probable?

a) Linda is a bank teller

b) Linda is a bank teller and is active in the feminist movement

Pretty obvious, right? She simply must be active in the feminist movement! Sadly, this answer is not correct, as becomes clear when the question is considered from a probabilistic point of view. The probability that Linda is a bank teller and active in the feminist movement can never be higher than the probability of her being just a bank teller. For visualization, simply think of a sample of 100 bank tellers. All of them are bank tellers, but most likely not all of them will be enthusiastic about gender equality. Even if many bank tellers who share Linda’s characteristics join a women’s rights group, their number can never exceed the total number of bank tellers. This is a demonstration of what Kahneman calls the “conjunction fallacy” (1). The story about Linda just makes too much sense for us to reject it. We can assume that biases like this are often based on prejudice and gossip. Indeed, it seems pretty stupid to follow your gut feeling in this case. But let us now reframe the problem a bit.
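Before moving on, the counting argument for the Linda problem can be sketched in a few lines of Python. This is only an illustration: the function name `count_tellers` and the share of feminists among the tellers (`p_feminist`) are made-up assumptions, not numbers from Kahneman's experiments.

```python
import random

def count_tellers(n_tellers=100, p_feminist=0.9, seed=0):
    """Count bank tellers and the subset who are also feminists.

    p_feminist is a made-up illustrative value, not an empirical estimate.
    """
    rng = random.Random(seed)
    # Every person in the sample is a bank teller by construction.
    is_feminist = [rng.random() < p_feminist for _ in range(n_tellers)]
    tellers = n_tellers
    tellers_and_feminists = sum(is_feminist)
    return tellers, tellers_and_feminists

tellers, both = count_tellers()
print(f"bank tellers: {tellers}")
print(f"bank tellers who are also feminists: {both}")
# The conjunction can never be more frequent than one of its parts.
assert both <= tellers
```

However high you set `p_feminist`, the second count can at best equal the first, which is exactly the conjunction rule.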

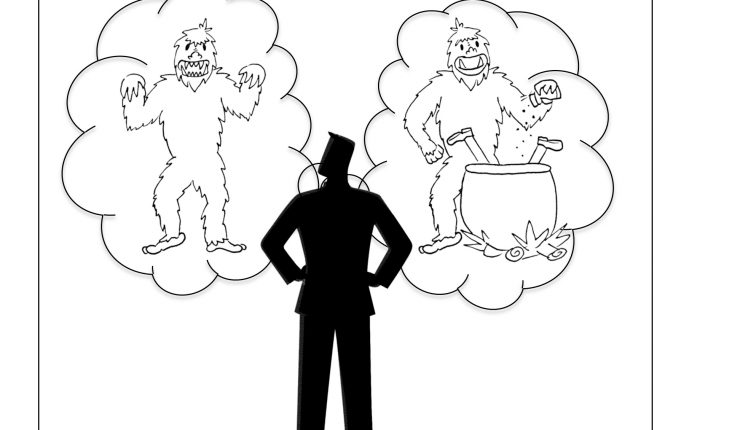

Imagine that after quitting your job as a manager you are wandering through the Bhutanese Himalaya on an endless trip of self-discovery. Suddenly, just after you leave the forest to head for the summit, a giant Yeti appears in front of you. He has a big mouth with an impressive array of long, sharp teeth, and residues of fabric and blood on his large claws.

Which reasonable assumption do you make about the Yeti?

a) It is a pretty big white fellow who feeds on insects and mushrooms

b) It is a pretty big white fellow who feeds on insects, mushrooms, squirrels, deer, Bhutanese monks and former managers; the latter he usually devours slowly cooked in a sauce made from the finest herbs the Himalaya can offer, served on a bed of fresh bamboo seeds.

Although in this example the first answer is again correct from a probabilistic point of view (it is indeed quite unlikely that the Yeti does not know that managers actually taste best with rice and soy sauce), it is also pretty clear which assumption is smarter if you do not want to risk getting eaten (if you have read the article about Antifragility: which assumption reduces your Black Swan exposure?). How is it possible that a bias, which is usually considered negative, suddenly yields the smarter decision? The answer to this question sheds light on a distinct property of heuristics called “ecological rationality”, a term coined by the German psychologist and heuristics expert Gerd Gigerenzer (2). In a nutshell, it means that the “smartness” of a heuristic depends on the environment in which and for which it was developed.

Going back to our example above, it is clear that during most of the time in which our ancestors’ brains were shaped, they were mostly confronted with decisions about whether a certain aspect of their environment presented a threat to their survival. It is OK to freak out a bit occasionally if it helps you to avoid a mistake that could be fatal (note the asymmetry of the return distribution: instead of predicting the rare event, we reduce our exposure to it). So we could argue that this heuristic is useful in an environment that is hard to predict and where fatal errors should be avoided. Although this seems pretty obvious, biases like this are often taken as an example to explain why humans make stupid decisions and to demonstrate the inability of humans to reason properly. “Correct”, you may say, “but this is just the usual caveman bullshit. In our modern world, there is no need to flee from saber-toothed tigers all the time. So making a logical decision based on probabilities and reasoning should always be superior to primitive instincts!”

Indeed, there is usually no need to run for your life nowadays, at least if you stay away from the Bhutanese Himalaya. So if you want to score high on Kahneman’s brain teasers, you should pay close attention in your probability and statistics classes. But there is something else that distinguishes Kahneman’s experiments from many situations in real life: in the experiments, the correct answer is well defined and can be described in probabilistic language. In everyday life this is often not the case. For instance, how do you estimate the probability that this awesome sweater will still be in the shop if you return two hours later? What is the probability that you will not regret abandoning your default dish in your favorite restaurant to pick the exotic recommendation of your friend? Of course, you can try to perform some arcane calculations that will certainly yield a number in the end (if you are lucky, it will be between 0 and 100%). More likely, though, you will simply rely on your gut feeling, which will often give a satisfactory answer.

Besides the fact that thinking in probabilities is often not feasible, there are situations where heuristics lead to a better answer than a complicated probabilistic model fed with a lot of data. Gigerenzer and his co-researchers have studied such situations extensively (for a nice summary of their early research, check out the book “Simple Heuristics that Make us Smart”) (2). Here we will refer to one example investigated by Gigerenzer: given the names of two cities or some other clues about them, which one is the larger one? Surprisingly, if we use a heuristic that most people would intuitively apply to the problem, we can even beat machine learning algorithms (this has been investigated, for instance, in Gigerenzer’s paper “Homo Heuristicus: Why Biased Minds Make Better Inferences”) (3). The heuristic is very simple:

if you know only one of the two cities, assume that this one is larger,

or alternatively,

if one of the cities has a soccer team in the major league, assume it is larger.
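The two rules above can be written as a tiny decision function. This is a minimal sketch, not Gigerenzer's implementation: the function name `pick_larger`, the order in which the two cues are tried, and the example sets are assumptions made for illustration.

```python
def pick_larger(city_a, city_b, recognized, top_league=frozenset()):
    """Guess which of two cities is larger using two simple cues.

    1. Recognition: if exactly one city is recognized, pick it.
    2. Soccer cue: otherwise, if exactly one has a major-league team, pick it.
    Returns None when neither cue discriminates (one would have to guess).
    """
    for cue in (recognized, top_league):
        in_a = city_a in cue
        in_b = city_b in cue
        if in_a and not in_b:
            return city_a
        if in_b and not in_a:
            return city_b
    return None

# Hypothetical knowledge of one person: the names they happen to recognize.
recognized = {"Munich", "Hamburg"}
# Hypothetical cue values, not a real league table.
top_league = {"Munich"}

print(pick_larger("Munich", "Herne", recognized))                # Munich
print(pick_larger("Herne", "Hamburg", recognized))               # Hamburg
print(pick_larger("Munich", "Hamburg", recognized, top_league))  # Munich
print(pick_larger("Munich", "Hamburg", recognized))              # None
```

Note that the function looks at one cue at a time and stops as soon as a cue discriminates. It never weighs or combines information, which is what makes it a heuristic rather than a statistical model.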

It is important to note here that we look at the predictive ability of the heuristic. A complex machine learning algorithm can perform quite well on the training set (i.e. the indicators and correct answers used to train the algorithm) yet poorly in out-of-sample prediction (technically speaking, the generalization error is high, which is a standard measure for the predictive ability of a machine learning algorithm). Gigerenzer shows a variety of other examples where very simple decision rules outperform more sophisticated probabilistic algorithms (4). Since using less information in those cases seems to be beneficial to the quality of the estimate, he calls this the “less-is-more effect”. Gigerenzer gives a technical explanation for the less-is-more effect, which is based on the “bias-variance trade-off”. Since this term is probably not familiar to readers without a formal education in statistics or machine learning, let me just briefly summarize it as follows:

“the error of an estimate depends on two quantities called bias and variance”.
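For readers who like formulas, this statement is usually written as the following decomposition of the expected squared error. The notation is introduced here for illustration and assumes that an observation is $y = f(x) + \varepsilon$ with zero-mean noise of variance $\sigma^2$, and that $\hat{f}(x)$ is the model's prediction, with the expectation taken over training sets and noise:

```latex
\mathbb{E}\big[(y - \hat{f}(x))^2\big]
  = \underbrace{\big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2}_{\text{bias}^2}
  + \underbrace{\mathbb{E}\big[(\hat{f}(x) - \mathbb{E}[\hat{f}(x)])^2\big]}_{\text{variance}}
  + \underbrace{\sigma^2}_{\text{noise}}
```

The noise term cannot be reduced by any model, so only bias and variance are up for negotiation.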

The first one, bias, measures how far our model is systematically off (the lower the bias, the better the fit), while the second one, variance, describes how vulnerable our model is to fluctuations in the data, which is especially relevant if only little data is available or if it is unreliable. Since it is usually not possible to reduce both of them at the same time, heuristics can improve the prediction by accepting some bias in exchange for a lower variance. In a sense, this increases the stability of our prediction in an uncertain environment (note again the relation to Antifragility: by accepting inferior predictions on most occasions, we avoid making a very severe mistake). To conclude, we can see that even the last sentence of our initial statement, that the correct decision rule based on all available data is always preferable, can be wrong under certain circumstances.
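To make the trade-off tangible, here is a minimal simulation sketch. It is a toy example, not one of Gigerenzer's experiments: we estimate an unknown mean from only three noisy observations, once with the plain sample mean and once with a deliberately biased version that is shrunk toward zero. The function name `simulate` and all parameter values are assumptions chosen for illustration.

```python
import random
import statistics

def simulate(true_mean=0.5, noise_sd=2.0, n_samples=3, shrink=0.5,
             n_trials=20000, seed=0):
    """Compare the plain sample mean with a shrunken (biased) version.

    All parameter values are illustrative assumptions.
    Returns {name: (bias, variance, mean squared error)}.
    """
    rng = random.Random(seed)
    estimates = {"plain mean": [], "shrunken mean": []}
    for _ in range(n_trials):
        sample = [rng.gauss(true_mean, noise_sd) for _ in range(n_samples)]
        mean = statistics.fmean(sample)
        estimates["plain mean"].append(mean)
        estimates["shrunken mean"].append(shrink * mean)  # pulled toward 0

    results = {}
    for name, values in estimates.items():
        center = statistics.fmean(values)
        bias = center - true_mean
        variance = statistics.fmean([(v - center) ** 2 for v in values])
        mse = statistics.fmean([(v - true_mean) ** 2 for v in values])
        results[name] = (bias, variance, mse)
    return results

for name, (bias, variance, mse) in simulate().items():
    print(f"{name:14s} bias={bias:+.3f} variance={variance:.3f} mse={mse:.3f}")
```

With these settings the shrunken estimate is systematically off, yet its total error is smaller because it fluctuates far less from sample to sample. The advantage disappears when data is plentiful or the true mean is far from zero, which is the point of ecological rationality: whether the simple rule wins depends on the environment.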

I hope this article has given you a glimpse into the fascinating world of heuristics and how humans use them to make smart and fast decisions. In particular, I wanted to show that the often-quoted irrationality of humans can actually be very rational if we consider decision making in the type of scenario in which human intuition was forged: the real world.

Johannes Thiele

References:

- (1) Kahneman, D., “Thinking, Fast and Slow”, 2012, Penguin

- (2) Gigerenzer, G., and Todd, P.M., “Simple Heuristics that Make us Smart”, 1999, Oxford University Press

- (3) Gigerenzer, G., and Brighton, H., “Homo Heuristicus: Why Biased Minds Make Better Inferences”, 2009

- (4) Gigerenzer, G., “Risk Savvy: How to Make Good Decisions”, 2015, Penguin
